How the ARISE Framework™ Operationalizes Governance for AI

March 20, 2026

Dr. Joshua Scarpino

Most organizations have entered the AI era with policies. They wrote acceptable-use documents, formed AI committees, and published principles. Some created governance pages on their intranet. Then they deployed AI.

The gap between those two moments, policy and deployment, is now where agentic AI is exposing the limits of traditional governance. And it is doing so quickly.

Agency is not a feature; it is the transfer of decision rights. When AI systems move from generating answers to initiating actions, the governance question is no longer “Is the model accurate?” It becomes “Who is accountable when the system acts?” Policies written for the former state cannot govern the latter.

The ARISE Framework™ was built to help close that gap.

The Agentic AI Governance Problem

Agentic AI systems, which pursue goals, chain tasks, call APIs, and execute workflows without human direction, do not operate the way traditional AI governance programs assumed. Traditional AI governance focused on models that produce outputs: the system generates a prediction, classification, or text, and a human reviews the result and decides what action to take. Agentic AI removes that human review point from the middle of the loop.

The operational consequences are not theoretical. McKinsey research shows that 80 percent of organizations have already encountered risky behavior from deployed AI agents, with risks ranging from data exfiltration and scope creep to coordinated multi-agent failures triggered by a single data poisoning event.¹ A procurement agent, a cloud infrastructure agent, and a customer-facing agent all trained on shared internal knowledge represent a single attack surface spanning operations, finance, and customer trust: one poisoned knowledge source can compromise all three.

Regulatory compliance becomes far more complex when AI systems can take thousands of actions daily without human review. The GTG-1002 cyber espionage campaign that Anthropic disclosed in November 2025 is a critical warning: attackers repurposed Claude Code into synchronized clusters of autonomous orchestrators and agents that executed 80 to 90 percent of the campaign’s tactical operations at “physically impossible request rates.”²

As NACD’s Directorship Magazine has noted, traditional compliance approaches that rely on periodic audits, approval workflows, and after-the-fact review are insufficient for systems that operate in real time across multiple jurisdictions and regulatory domains.³ The document trail that satisfied a SOC 2 auditor last year will not satisfy one reviewing new controls for agentic deployments today. Policies that pass review and impose administrative controls do not constrain what an autonomous agent can do within live production systems. This governance gap is what ARISE was built to bridge.

What Is ARISE

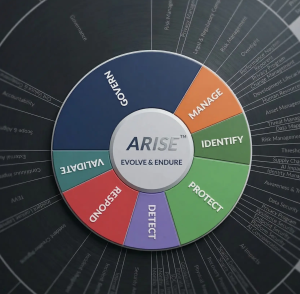

The ARISE Framework™ is a seven-domain assurance model (GOVERN, MANAGE, IDENTIFY, PROTECT, DETECT, RESPOND, and VALIDATE) developed by Assessed Intelligence to operationalize secure and responsible technology at enterprise scale. Many current frameworks define what governance should look like in isolation; ARISE specifies what unified governance should do: discrete, auditable controls that are mapped to regulatory requirements and other frameworks, assigned priorities, and connected to evidence and audit requirements.

The ARISE Framework contains structured requirements across every domain. For agentic AI, four domains carry the heaviest operational weight.

GOVERN: Making Policy Executable

The GOVERN domain transforms organizational intent into operational constraint. For agentic AI, policies governing autonomous decision-making must be embedded at the system level, not housed in documents that agents cannot read and are not designed to enforce.

ARISE GOVERN controls require organizations to define the authority boundaries of AI agents before deployment. What systems can the agent access? What actions require human authorization? What constitutes an escalation trigger? These are formal control requirements with evidence expectations, not rhetorical questions to be addressed in a future policy revision.
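To show what “embedded at the system level” can look like, the three questions above can be expressed as data and checked at the integration layer before an agent’s action runs. The sketch below is a minimal illustration under stated assumptions, not an ARISE artifact: the `AuthorityBoundary` class, the system and action names, the monetary escalation threshold, and the three-way allow/escalate/deny decision are all hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    REQUIRE_HUMAN_APPROVAL = "require_human_approval"
    DENY = "deny"


@dataclass(frozen=True)
class AuthorityBoundary:
    """Hypothetical pre-deployment authority boundary for a single agent."""
    agent_id: str
    allowed_systems: frozenset[str]    # systems the agent may touch at all
    approval_required: frozenset[str]  # actions that always need a human sign-off
    escalation_amount: float           # transaction value that triggers escalation


def authorize(boundary: AuthorityBoundary, system: str, action: str,
              amount: float = 0.0) -> Decision:
    """Check a proposed action against the boundary before the agent executes it."""
    if system not in boundary.allowed_systems:
        # Deny by default: anything outside the declared scope is blocked.
        return Decision.DENY
    if action in boundary.approval_required or amount >= boundary.escalation_amount:
        # Escalation trigger: route to the named human approver.
        return Decision.REQUIRE_HUMAN_APPROVAL
    return Decision.ALLOW


# Hypothetical boundary for an invoice-processing agent.
procurement = AuthorityBoundary(
    agent_id="procurement-agent-01",
    allowed_systems=frozenset({"erp", "vendor_portal"}),
    approval_required=frozenset({"create_vendor"}),
    escalation_amount=10_000.0,
)

print(authorize(procurement, "erp", "pay_invoice", amount=2_500.0).value)   # allow
print(authorize(procurement, "erp", "pay_invoice", amount=25_000.0).value)  # require_human_approval
print(authorize(procurement, "hr_system", "read_record").value)             # deny
```

The design choice worth noting is deny-by-default: a system missing from the boundary is blocked rather than escalated, so scope creep fails closed instead of open.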

NACD’s Directorship Magazine identifies what forward-thinking organizations are calling embedded compliance: building regulatory requirements directly into an AI system’s design and operation rather than layering policy documents on top of deployed systems.³ ARISE codifies this as a control obligation, not a best practice. The framework distinguishes between having a governance policy and operating a governed system; only the latter produces assurance.

MANAGE: Lifecycle Accountability for Autonomous Systems

The MANAGE domain governs the full lifecycle of AI assets, including inventory, classification, ownership, and retirement. For agentic AI, this domain is where most organizations currently have the largest gaps.

Governance must assign named individuals: someone who monitors behavior, someone who approves high-impact actions, and someone who intervenes when outcomes deviate from intent. ARISE MANAGE controls enforce exactly this structure. Every deployed agent must have an identified owner, every agent-to-agent workflow must be inventoried, and every integration point must be scoped and documented.
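The ownership structure above is checkable: an inventory can reject entries with an empty role and flag agent-to-agent workflows that reference agents nobody has inventoried. The following is a minimal sketch assuming a simple in-memory inventory; `AgentRecord`, `inventory_gaps`, the role field names, and the placeholder identifiers are hypothetical and not part of ARISE.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentRecord:
    """Hypothetical inventory entry for one deployed agent."""
    agent_id: str
    owner: str                    # accountable owner of the deployed agent
    monitor: str                  # person who monitors behavior
    approver: str                 # person who approves high-impact actions
    intervener: str               # person with authority to stop the agent
    integrations: tuple[str, ...]  # scoped, documented integration points


def inventory_gaps(records, workflows):
    """Return findings for missing named roles, undocumented integrations,
    and workflows that reference agents absent from the inventory.

    `workflows` is an iterable of (caller_agent_id, callee_agent_id) pairs.
    """
    findings = []
    known = {r.agent_id for r in records}
    for r in records:
        for role in ("owner", "monitor", "approver", "intervener"):
            if not getattr(r, role).strip():
                findings.append(f"{r.agent_id}: no named {role}")
        if not r.integrations:
            findings.append(f"{r.agent_id}: no documented integration points")
    for caller, callee in workflows:
        for agent in (caller, callee):
            if agent not in known:
                findings.append(f"workflow {caller}->{callee}: {agent} not in inventory")
    return findings


# Hypothetical inventory with two gaps and one uninventoried workflow endpoint.
records = [
    AgentRecord("procurement-agent-01", "j.doe", "a.lee", "m.chen", "j.doe", ("erp",)),
    AgentRecord("support-agent-02", "r.khan", "", "r.khan", "s.ortiz", ()),
]
workflows = [("procurement-agent-01", "support-agent-02"),
             ("support-agent-02", "billing-agent-03")]

for finding in inventory_gaps(records, workflows):
    print(finding)
```

Running a check like this on every inventory change turns “every deployed agent must have an identified owner” from a statement into a finding that either exists or does not.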

This is not administrative overhead; it is the minimum viable accountability structure for autonomous systems. IBM’s research on agentic governance identifies a critical transition point: when oversight of control logic moves from “human in the loop” to “human on the loop,” the accountable party is the one who signs off on the use of agentic AI and its automated governance systems.⁴ ARISE requires that this sign-off be a formal, auditable event with documented evidence rather than an informal approval with no record.

MANAGE controls also require that AI system changes, such as model updates, prompt changes, and integration expansions, pass through formal change management. Agentic systems are sensitive to context shifts: drift can arise from changes in operating context rather than from model updates, so new integrations, user behaviors, or policy changes can shift risk exposure even when the underlying model remains unchanged. ARISE treats drift detection as a lifecycle control requirement, not a monitoring afterthought.
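One way to make unapproved changes visible is to fingerprint the configuration that change management governs and compare the running fingerprint with those recorded at approval time. This sketch assumes the governed configuration is a model version string, a system prompt, and a list of integrations; the function names and values are hypothetical. It catches only configuration changes, including an integration expansion with no model change, and does not detect behavioral drift on its own.

```python
import hashlib
import json


def config_fingerprint(model_version: str, system_prompt: str,
                       integrations: list[str]) -> str:
    """Hash the parts of an agent's configuration that change management governs."""
    payload = json.dumps(
        {"model": model_version, "prompt": system_prompt,
         "integrations": sorted(integrations)},  # order-insensitive
        sort_keys=True,
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def unapproved_change(running: str, approved: set[str]) -> bool:
    """True if the running configuration matches no approved change record."""
    return running not in approved


# Hypothetical fingerprint recorded at the last change-approval sign-off.
approved = {config_fingerprint("model-v1", "You process invoices.", ["erp"])}

# Same model and prompt, but an integration was added without a change record.
running = config_fingerprint("model-v1", "You process invoices.", ["erp", "payments_api"])
print(unapproved_change(running, approved))  # True
```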

DETECT: Anomaly Detection as an AI-Specific Control

The DETECT domain, specifically the Anomaly Detection control (D.AD) within ARISE, addresses one of the most technically demanding challenges in agentic governance: distinguishing normal autonomous behavior from harmful autonomous behavior.

ARISE D.AD requires organizations to establish behavioral baselines for both systems and AI models: the ground truth against which deviations are measured. Detection methods must combine ML models, rules, and heuristics to surface not only security anomalies but also ethical drift events, such as shifts in model output bias. Detection alerts must map to documented incident playbooks, closing the operational gap between alert generation and response execution.
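The rules-and-heuristics portion of this requirement can be sketched as a per-metric baseline learned from a known-good period, a z-score test against it, and a lookup that maps each alert to a playbook. This is a minimal illustration assuming roughly stable metrics; the metric names, the playbook identifiers, and the three-standard-deviation threshold are hypothetical, and an ML-based detector would sit alongside it rather than be replaced by it. An ethical drift signal, such as a gap in approval rates between groups, can be fed in as just another metric.

```python
import statistics
from dataclasses import dataclass


@dataclass(frozen=True)
class Baseline:
    """Behavioral baseline for one metric, fitted on a known-good period."""
    metric: str
    mean: float
    stdev: float

    @classmethod
    def fit(cls, metric: str, history: list[float]) -> "Baseline":
        return cls(metric, statistics.fmean(history), statistics.stdev(history))

    def z_score(self, value: float) -> float:
        if self.stdev == 0:
            # A constant baseline: any change at all is maximally anomalous.
            return 0.0 if value == self.mean else float("inf")
        return (value - self.mean) / self.stdev


# Hypothetical mapping from metric to a documented incident playbook.
PLAYBOOKS = {
    "tool_calls_per_hour": "PB-07 Agent scope review",
    "approval_rate_gap": "PB-12 Output bias investigation",
}


def evaluate(baseline: Baseline, value: float, threshold: float = 3.0):
    """Return the playbook to open if `value` deviates beyond `threshold` std devs."""
    if abs(baseline.z_score(value)) > threshold:
        return PLAYBOOKS.get(baseline.metric, "PB-00 Generic triage")
    return None


calls = Baseline.fit("tool_calls_per_hour", [40, 42, 38, 41, 39, 40, 43, 37])
print(evaluate(calls, 41))   # None
print(evaluate(calls, 120))  # PB-07 Agent scope review
```

Returning a playbook rather than a bare boolean is the point of the sketch: an alert that does not name its response step leaves the gap between alert generation and response execution open.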

The most advanced requirement in D.AD is cross-domain anomaly correlation: the capacity to correlate a spike in outbound network traffic with a cluster of unusual model prompts to identify coordinated attacks spanning the network, application, and AI inference layers simultaneously. This capability is not standard SOC practice; it is an AI-specific detection posture that ARISE formalizes as a control requirement.
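A simple form of cross-domain correlation groups anomalies that hit the same asset within a short time window and keeps only groups that span more than one layer. The sketch below assumes each anomaly has already been attributed to a common asset identifier across network, application, and inference telemetry, which in practice is the hard part; the `Event` type, layer names, and the ten-minute window are hypothetical choices for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Event:
    layer: str       # e.g. "network", "application", "inference"
    asset: str       # host or agent the anomaly is attributed to
    time: datetime
    detail: str


def correlate(events, window=timedelta(minutes=10), min_layers=2):
    """Group same-asset anomalies that fall within `window` of the group's first
    event; return (asset, group) pairs whose group spans >= `min_layers` layers."""
    by_asset = {}
    for e in sorted(events, key=lambda e: e.time):
        by_asset.setdefault(e.asset, []).append(e)

    incidents = []
    for asset, evs in by_asset.items():
        groups, current = [], []
        for e in evs:
            if current and e.time - current[0].time > window:
                groups.append(current)
                current = []
            current.append(e)
        if current:
            groups.append(current)
        incidents += [(asset, g) for g in groups
                      if len({x.layer for x in g}) >= min_layers]
    return incidents


t0 = datetime(2026, 3, 20, 9, 0)
events = [
    Event("network", "agent-7", t0, "outbound traffic 12x baseline"),
    Event("inference", "agent-7", t0 + timedelta(minutes=3), "burst of unusual prompts"),
    Event("network", "agent-9", t0 + timedelta(minutes=1), "outbound traffic 3x baseline"),
]
for asset, group in correlate(events):
    print(asset, [e.layer for e in group])  # agent-7 ['network', 'inference']
```

Neither agent-7 signal alone may cross an alert threshold; the correlated pair on one asset is what points to a coordinated attack, while the single-layer spike on agent-9 is left to ordinary triage.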

VALIDATE: No High-Risk AI Ships Without Evidence

The VALIDATE domain closes the governance loop by requiring that AI systems, especially high-risk deployments, pass structured validation protocols before production release.

ARISE VALIDATE controls require the definition of risk-gated go/no-go criteria linked to organizational risk appetite, with executive or governance approval required when results fall close to those thresholds. Validation must occur under production-like conditions, not in synthetic test environments. Operational trials must assess performance, safety, explainability, and human factors. Rollback paths and human-in-the-loop procedures must be validated, not assumed.

This requirement addresses a structural failure mode in current AI deployment practices. Validation protocols that stop at proof-of-concept performance do not meet the standard that regulators and auditors will apply to agentic systems. ARISE VALIDATE requires evidence of production readiness, not demonstration-environment readiness.
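A risk-gated go/no-go decision can be sketched as a set of criteria, each with a minimum score and a marginal band just above it. Results below a threshold, or missing altogether, block release; results inside the band pass only with executive or governance approval. The criterion names, thresholds, and margins below are hypothetical placeholders for values an organization would derive from its own risk appetite, and the “marginal band” is one possible reading of a marginal result.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Criterion:
    name: str
    threshold: float  # minimum acceptable score
    margin: float     # band above the threshold treated as marginal


def go_no_go(results: dict, criteria: list) -> str:
    """Return 'GO', 'ESCALATE' (needs executive/governance approval), or 'NO-GO'."""
    decision = "GO"
    for c in criteria:
        score = results.get(c.name)
        if score is None or score < c.threshold:
            return "NO-GO"         # missing evidence or failing score blocks release
        if score < c.threshold + c.margin:
            decision = "ESCALATE"  # passes, but too close to the line to self-approve
    return decision


# Hypothetical criteria; a rollback drill must pass outright, not be assumed.
criteria = [
    Criterion("task_success_rate", threshold=0.95, margin=0.02),
    Criterion("safety_eval_pass_rate", threshold=0.99, margin=0.005),
    Criterion("rollback_drill_pass_rate", threshold=1.0, margin=0.0),
]

print(go_no_go({"task_success_rate": 0.96, "safety_eval_pass_rate": 0.998,
                "rollback_drill_pass_rate": 1.0}, criteria))  # ESCALATE
```

Treating a missing result as NO-GO rather than skipping it is what makes the gate evidence-driven: a criterion that was never tested cannot pass.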

The Operationalization Imperative

The question facing technology and risk leaders is not whether agentic AI requires a new governance model. IBM has identified a robust operational framework for governance and lifecycle management as a requirement for organizations deploying autonomous systems.⁴ The question is whether the governance model organizations build will produce real-time, auditable control over autonomous systems or documentation that lags behind the deployments it is meant to govern.

Operationalizing AI governance means something specific. It is not writing policies, forming a committee, or publishing principles; each of these activities produces artifacts. Operationalization produces outcomes: measurable, auditable, enforceable outcomes that constrain AI system behavior and generate evidence that the constraints are working.

The distinction matters because most organizations have the former and believe they have the latter. That belief is the single largest governance risk in the enterprise AI stack today.

From Principle to Control

A governance principle states an intent. A control enforces it. The distance between those two things is where agentic AI incidents are currently occurring.

Consider a common organizational principle: “AI systems will operate within defined authority boundaries.” Without operationalization, that principle produces a policy document. With operationalization, it produces a structured set of controls: scoped agent permissions enforced at the integration layer, documented in the AI asset inventory, reviewed on a defined cadence, and tested through exercises that verify the boundaries hold. The principle is the same in both cases; the risk posture is not.

Operationalizing governance means translating every principle into a discrete, assignable, and testable control. Each control must answer four questions:

A governance program that cannot answer all four questions for each of its stated principles has governance documentation, not governance.

From Documentation to Evidence Generation

Audit-ready governance does not mean having documents. It means having evidence: records that demonstrate controls were operating at the time a decision was made, a system was deployed, or an agent took an action.

For agentic AI, evidence generation is particularly demanding. Agents act continuously, often across multiple systems simultaneously. A procurement agent processing invoices can generate hundreds of discrete decisions per day, each representing a point of accountability. Effective operationalization means the governance architecture captures this activity automatically, without depending on human logging or manual review to create the audit trail.

ARISE controls address this through structured evidence requirements tied to each control domain. MANAGE controls require asset inventory records and change approval logs. DETECT controls require anomaly logs tied to incident playbooks. VALIDATE controls require acceptance records and validation protocols before any high-risk AI system reaches production. These are evidence obligations that govern what gets produced, retained, and reviewed; they are not documentation recommendations.
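Automatic evidence capture can be illustrated with an append-only log in which each record stores the hash of the previous one, so editing or removing an earlier record is detectable after the fact. Hash chaining is a common technique for tamper-evident logs, not something ARISE prescribes; the `EvidenceLog` class, its fields, and the control label are hypothetical. A production version would write to append-only storage and anchor the latest hash outside the system, since truncating the newest entries is not caught by the chain alone.

```python
import hashlib
import json
from datetime import datetime, timezone


class EvidenceLog:
    """Append-only, hash-chained record of agent actions (tamper-evident, not tamper-proof)."""

    GENESIS = "0" * 64

    def __init__(self):
        self.entries = []

    def record(self, agent_id: str, action: str, decision: str, control: str) -> dict:
        prev_hash = self.entries[-1]["hash"] if self.entries else self.GENESIS
        entry = {
            "ts": datetime.now(timezone.utc).isoformat(),
            "agent_id": agent_id,
            "action": action,
            "decision": decision,  # outcome of the control check at action time
            "control": control,    # which control this evidence supports
            "prev_hash": prev_hash,
        }
        entry["hash"] = self._digest(entry)
        self.entries.append(entry)
        return entry

    @staticmethod
    def _digest(entry: dict) -> str:
        body = {k: v for k, v in entry.items() if k != "hash"}
        return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()

    def verify(self) -> bool:
        """Recompute the chain; edits or removals before the newest entry break it."""
        prev = self.GENESIS
        for e in self.entries:
            if e["prev_hash"] != prev or e["hash"] != self._digest(e):
                return False
            prev = e["hash"]
        return True


log = EvidenceLog()
log.record("procurement-agent-01", "pay_invoice:INV-1042", "allow", "GOVERN: authority boundary")
log.record("procurement-agent-01", "pay_invoice:INV-1043", "require_human_approval",
           "GOVERN: authority boundary")
print(log.verify())                 # True
log.entries[0]["decision"] = "deny"  # simulate after-the-fact tampering
print(log.verify())                 # False
```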

An organization that generates governance evidence automatically, through system design rather than manual process, can demonstrate assurance in real time. An organization relying on periodic audits and after-the-fact documentation cannot. In an environment where AI agents execute thousands of actions daily, the latter posture represents a material liability.

From Ownership in Theory to Accountability in Practice

Policy documents assign responsibility abstractly. Operationalization assigns it to named individuals with defined authority. This is the difference between “the AI team is responsible” and a documented ownership record that names a specific individual as the designated owner of a specific deployed agent, defines their authority to approve high-impact actions, and establishes a documented escalation path for events exceeding defined thresholds.

The second version is functional accountability: the kind that survives an incident, a regulatory inquiry, or a board-level question. As IBM notes, when oversight moves from “human in the loop” to “human on the loop,” accountability does not disappear; it concentrates.⁴ ARISE MANAGE controls formalize this concentration by requiring named ownership for every deployed AI asset, explicit scope documentation for every integration point, and defined intervention authority for every agent workflow.

Organizations that have deployed agents without this structure are not ungoverned in theory. They are ungoverned in practice, because no specific individual has clear authority to stop a specific agent when it begins behaving outside its intended scope.

From Reactive Monitoring to Continuous Assurance

Trustible has observed a defining shift in enterprise AI governance maturity: AI governance is moving beyond simple intake forms toward active, lifecycle-based management that includes real-time monitoring and embedded controls.⁵ The questionnaire an AI project completes before deployment was never comprehensive governance; it is simply a record of intent. Governance is what happens after deployment, continuously, for as long as the system operates.

Traditional security and compliance monitoring is event-driven. Something goes wrong; the team investigates. Compliance reviews happen annually, and audits follow incidents. Agentic AI breaks this model because systems that act continuously at machine speed generate risk continuously. By the time a quarterly review surfaces a governance gap, that gap may have been open for as long as three months. By the time an annual audit identifies a misconfigured agent permission, the agent has made thousands of decisions under that configuration.

Operationalized governance replaces reactive review cycles with a continuous assurance architecture. Detection controls run against behavioral baselines in real time, and drift detection monitors for changes in agent behavior that may indicate scope expansion, data access anomalies, or model degradation. Governance metrics must flow into executive dashboards, not only into audit binders. When a threshold is breached, the response must not wait for the next review cycle; it must trigger an incident playbook immediately.
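Behavioral drift of the kind described above can be approximated by comparing the mix of actions an agent takes in a recent window with its baseline window. The sketch uses total variation distance, one simple distribution distance chosen here for illustration, and separately flags action types never seen in the baseline as possible scope expansion. The action labels and the 0.2 threshold are hypothetical; a breach is the signal that would open an incident playbook rather than wait for a review cycle.

```python
from collections import Counter


def action_distribution(actions):
    """Convert a list of action labels into relative frequencies."""
    counts = Counter(actions)
    total = sum(counts.values())
    return {a: n / total for a, n in counts.items()}


def drift_report(baseline_actions, current_actions, tvd_threshold=0.2):
    """Compare a current window of agent actions with a baseline window.

    Returns the total variation distance between the two action distributions,
    action types absent from the baseline (possible scope expansion), and
    whether either signal constitutes a breach.
    """
    p = action_distribution(baseline_actions)
    q = action_distribution(current_actions)
    keys = set(p) | set(q)
    tvd = 0.5 * sum(abs(p.get(k, 0.0) - q.get(k, 0.0)) for k in keys)
    new_actions = sorted(set(q) - set(p))
    return {"tvd": tvd, "new_actions": new_actions,
            "breach": tvd > tvd_threshold or bool(new_actions)}


baseline = ["read_invoice"] * 70 + ["pay_invoice"] * 30
current = ["read_invoice"] * 50 + ["pay_invoice"] * 40 + ["export_vendor_list"] * 10
report = drift_report(baseline, current)
print(round(report["tvd"], 2), report["new_actions"], report["breach"])
# 0.2 ['export_vendor_list'] True
```

Flagging new action types independently of the distance matters because a rare but new action, such as a bulk export, can barely move the distribution while still signaling that the agent has left its intended scope.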

This is the architecture that ARISE moves organizations toward. The DETECT domain’s anomaly detection controls, the MANAGE domain’s lifecycle and drift requirements, and the VALIDATE domain’s post-deployment monitoring windows together form a continuous assurance posture rather than a point-in-time compliance posture.

The Maturity Divide

Organizations are currently dividing into two groups. The first group has policies and believes governance is in place. The second group has controls, evidence, named owners, and continuous monitoring, and understands the difference between the two.

As AI deployment accelerates and regulatory exposure increases across the EU AI Act, CMMC, ISO/IEC 42001, and state-level frameworks, that divide will become a material risk differentiator. EY’s research on agentic AI governance finds that, as the pace of AI innovation accelerates, organizations require continuous governance updates and tailored oversight, and that static frameworks will not remain effective as agentic capabilities evolve.⁶ Regulators will not ask whether an organization has an AI governance policy. They will ask whether the organization can demonstrate that its AI systems operated within defined boundaries, that deviations were detected, that owners were accountable, and that remediation was documented.

The answer to those questions is not found in a policy document. It is found in the operational record, the evidence trail that governance either generates automatically or fails to generate at all.

Policy is not a control. Governance that cannot be demonstrated cannot be trusted. ARISE is built for organizations that understand the difference.

Assessed Intelligence delivers vCISO and vCRAIO leadership, ARISE Framework™ implementation, and continuous assurance through the OPERATE retainer. If your organization is deploying agentic AI and needs governance that operates at the speed of your systems, speak with an advisor.

References

- Isenberg, R. & Rahilly, L. (2026, March 5). Trust in the Age of Agents. McKinsey & Company. https://www.mckinsey.com/capabilities/risk-and-resilience/our-insights/trust-in-the-age-of-agents

- Anthropic. (2025, November). Disrupting the first reported AI-orchestrated cyber espionage campaign [Full report]. https://assets.anthropic.com/m/ec212e6566a0d47/original/Disrupting-the-first-reported-AI-orchestrated-cyber-espionage-campaign.pdf

- Ahmed, S.Q. (2025, July 17). Agentic AI: A Governance Wake-Up Call. Directorship Magazine, National Association of Corporate Directors (NACD) / Infosys. https://www.nacdonline.org/all-governance/governance-resources/directorship-magazine/online-exclusives/2025/q3-2025/autonomous-artificial-intelligence-oversight/

- Boinodiris, P. & Parker, J. (2025, November). The Evolving Ethics and Governance Landscape of Agentic AI. IBM Think Insights. https://www.ibm.com/think/insights/ethics-governance-agentic-ai

- Trustible. (2026, January 7). Trustible’s 2026 AI Predictions. Trustible Newsletter. https://insight.trustible.ai/p/trustibles-2026-ai-predictions

- Zhu, Y. & Cobey, C. (2026, January 23). Six Steps to Enhance Governance and Increase Agentic AI’s Value. EY Canada, Technology Risk. https://www.ey.com/en_ca/insights/assurance/technology-risk/six-steps-to-enhance-agentic-ai-governance